Good post on the AI chip race in the...

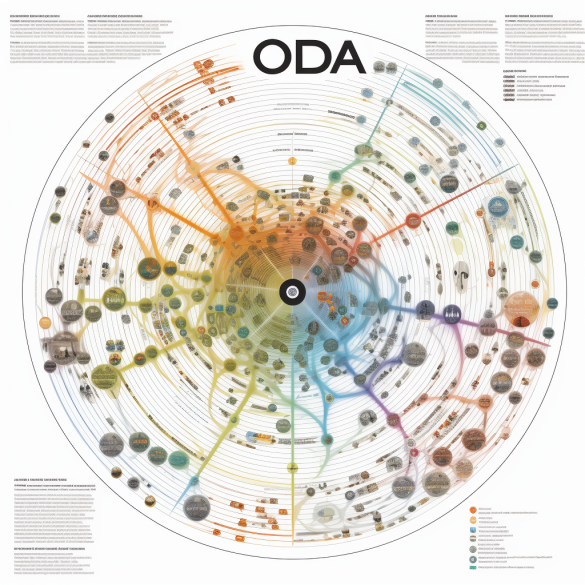

We need to “comprehend, shape, adapt to, and in...

You are likely losing ground if it is not...